Google Releases Gemma 3!

by Satish Yenumula

Google’s Gemma 3 Announcement

Gemma 3 Technical Report

Google has just unveiled Gemma 3, its latest advancement in the world of open-weight AI models, raising the bar for both efficiency and performance in the LLM space. This release brings significant improvements in reasoning, multilingual capabilities, and fine-tuning flexibility.

More experiments with Gemma 3 will be shared in the next part!

What’s New in Gemma 3?

Gemma 3 introduces several key updates designed to enhance usability and performance. Here’s what makes it stand out:

1. Smarter and More Efficient

Gemma 3 comes in four sizes (1B, 4B, 12B and 27B parameters), so the same model family can run on-device, on a single GPU, or in the cloud. Google positions it for faster inference and better energy efficiency, both of which matter for enterprise and edge AI deployments.

2. Multimodal Capabilities

Unlike Gemma 2, which handled text only, Gemma 3 is multimodal: the 4B, 12B and 27B models accept images alongside text as input and respond in text, while the smallest 1B model remains text-only. Gemma 3 also supports function calling and structured output, which helps when working with structured data. This opens new possibilities for industries like healthcare, autonomous systems, and content generation.

3. Stronger Alignment and Safety Features

AI safety remains a priority for Google. According to Google, Gemma 3 ships with updated safeguards intended to reduce bias and keep responses in line with its responsible AI principles, and the model was evaluated to reduce hallucinations and improve reliability across diverse scenarios. Alongside it, Google released ShieldGemma 2, an image safety classifier built on Gemma 3.

4. Open-Weight Flexibility

Gemma 3 follows the trend of open-weight models, allowing developers to fine-tune it and integrate it into custom workflows. This is a major advantage for research and industry applications that need domain-specific training.
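To see why fine-tuning open weights is practical even on modest hardware, here is a small sketch of the parameter arithmetic behind LoRA (low-rank adaptation), a widely used parameter-efficient fine-tuning method. LoRA is used here only as an illustration, not as a method Google prescribes for Gemma 3, and the 4096 × 4096 layer shape is a hypothetical example rather than Gemma 3's actual dimensions.

```python
def full_update_params(d_in: int, d_out: int) -> int:
    """Trainable parameters when fully fine-tuning one d_in x d_out weight matrix."""
    return d_in * d_out


def lora_update_params(d_in: int, d_out: int, rank: int) -> int:
    """Trainable parameters for a LoRA update W + B @ A.

    A has shape (rank, d_in) and B has shape (d_out, rank); the original
    weight W stays frozen, so only A and B are trained.
    """
    return rank * (d_in + d_out)


if __name__ == "__main__":
    # Hypothetical layer shape and rank, chosen only for illustration.
    d_in, d_out, rank = 4096, 4096, 8
    full = full_update_params(d_in, d_out)
    lora = lora_update_params(d_in, d_out, rank)
    print(f"full: {full:,}  lora: {lora:,}  ratio: {lora / full:.2%}")
```

Running it prints `full: 16,777,216  lora: 65,536  ratio: 0.39%`: for this hypothetical layer, the low-rank update trains well under 1% of the parameters that a full update would.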

Feature Comparison: Gemma 3 vs. Gemma 2 and Other Models

To put the improvements in context, here is a qualitative comparison of Gemma 3 against its predecessor and competing models:

| Feature | Gemma 3 | Gemma 2 | GPT-4 | Llama 3 |
|---|---|---|---|---|
| Multimodal Support | ✅ Yes | ❌ No | ✅ Yes | ✅ Yes |
| Energy Efficiency | 🔋 High | ⚡ Medium | 🔋 High | ⚡ Medium |
| Fine-Tuning Flexibility | ✅ Open | ✅ Open | ❌ Limited | ✅ Open |
| Bias Reduction | ✅ Improved | ⚠️ Moderate | ✅ Advanced | ✅ Advanced |
| Real-Time Processing | ⚡ Faster | 🚀 Standard | 🚀 Standard | ⚡ Faster |

Why Does Gemma 3 Matter?

One of the biggest advantages of Gemma 3 is that its weights can be run on-premises, offering greater flexibility for experimentation and deployment. Unlike cloud-dependent models that require API calls, Gemma 3 lets enterprises and researchers host, fine-tune, and experiment with the model on their own infrastructure. This makes it a strong choice for organizations that need data privacy, regulatory compliance, or custom domain-specific optimizations.
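A first question when hosting the model yourself is whether the weights fit in GPU memory. The sketch below estimates weight memory as parameter count × bytes per parameter. It assumes nominal sizes of 1B, 4B, 12B and 27B parameters (exact counts differ slightly) and ignores the KV cache, activations and framework overhead, so treat the results as lower bounds rather than deployment requirements.

```python
# Bytes needed to store one parameter at common precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "bf16": 2.0, "int8": 1.0, "int4": 0.5}

# Nominal Gemma 3 sizes (assumption: rounded, not exact parameter counts).
NOMINAL_SIZES = {"1B": 1e9, "4B": 4e9, "12B": 12e9, "27B": 27e9}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Approximate memory for the weights alone, in GB (1 GB = 1e9 bytes).

    Ignores KV cache, activations and runtime overhead, so real usage is higher.
    """
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    for name, params in NOMINAL_SIZES.items():
        row = "  ".join(
            f"{p}: {weight_memory_gb(params, p):6.1f} GB" for p in BYTES_PER_PARAM
        )
        print(f"{name:>4}  {row}")
```

At bf16, the 27B weights come to roughly 54 GB, which fits within an 80 GB H100; 4-bit quantization brings that down to about 13.5 GB, though long contexts add KV-cache memory on top.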

Google is making AI development more accessible and customizable.

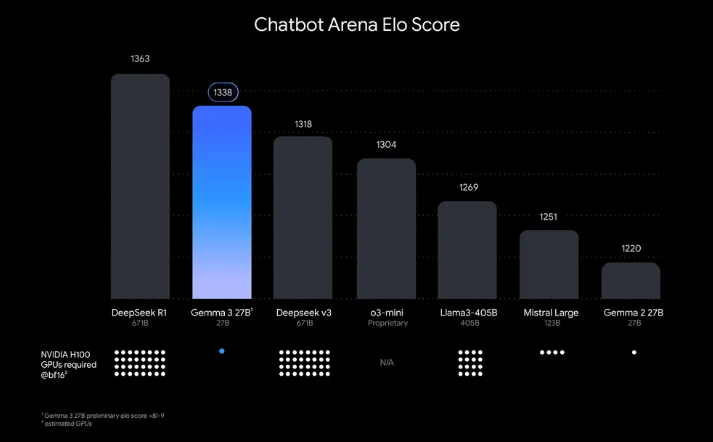

This chart ranks AI models by Chatbot Arena Elo score, where a higher score means users preferred that model's responses more often. Dots show the estimated number of NVIDIA H100 GPUs each model needs. Gemma 3 27B ranks highly while requiring only a single GPU, whereas some competing models need up to 32.
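To put Elo gaps in perspective, the standard Elo formula converts a rating difference into an expected head-to-head preference rate. This is a sketch of the classic Elo expectation with base 10 and scale 400, ignoring ties. Chatbot Arena fits its leaderboard with a Bradley-Terry model reported on that same logistic scale, so the formula is a reasonable way to read the gaps, not a reproduction of the leaderboard's method. The rating gaps below are hypothetical, not values taken from the chart.

```python
def expected_win_rate(rating_a: float, rating_b: float) -> float:
    """Probability that model A is preferred over model B under the
    Elo / Bradley-Terry logistic model (base 10, scale 400, ties ignored)."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


if __name__ == "__main__":
    # Hypothetical rating gaps, not values read from the chart.
    for gap in (0, 25, 50, 100, 400):
        rate = expected_win_rate(1000 + gap, 1000)
        print(f"gap {gap:>3}: A preferred {rate:.1%} of the time")
```

A 100-point gap corresponds to roughly a 64% preference rate, so models separated by a few dozen points are preferred only slightly more often than not.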

How to Get Started with Gemma 3

Developers can try Gemma 3 in Google AI Studio, and the weights can be downloaded (for example from Hugging Face and Kaggle) for fine-tuning in common machine learning frameworks. Google has also published developer documentation and offers API access for integrating the model into applications.
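Whichever route you take, the instruction-tuned Gemma checkpoints expect conversations wrapped in turn markers. The sketch below builds that prompt string by hand, assuming the `<start_of_turn>`/`<end_of_turn>` format published for Gemma's instruction-tuned models, and assuming (as in Gemma 3's chat template) that a system instruction is folded into the first user turn. The `build_gemma_prompt` helper is hypothetical; in real code, prefer `tokenizer.apply_chat_template` from Hugging Face Transformers, which reads the template shipped with the checkpoint.

```python
from typing import Dict, List

# Gemma's chat format calls the assistant role "model".
ROLE_NAMES = {"user": "user", "assistant": "model"}


def build_gemma_prompt(
    messages: List[Dict[str, str]], add_generation_prompt: bool = True
) -> str:
    """Hypothetical helper: render chat messages in Gemma's turn format.

    A leading system message is merged into the first user turn, since the
    format has no separate system role. The <bos> token is omitted on the
    assumption that the tokenizer adds it when encoding.
    """
    messages = list(messages)  # copy so the caller's list is not mutated
    system_prefix = ""
    if messages and messages[0]["role"] == "system":
        system_prefix = messages.pop(0)["content"].strip() + "\n\n"
        if not messages or messages[0]["role"] != "user":
            raise ValueError("a system message must be followed by a user message")

    parts = []
    for i, msg in enumerate(messages):
        role = ROLE_NAMES[msg["role"]]  # raises KeyError for unsupported roles
        text = msg["content"].strip()
        if i == 0:
            text = system_prefix + text
        parts.append(f"<start_of_turn>{role}\n{text}<end_of_turn>\n")

    if add_generation_prompt:
        # Open a model turn so generation continues as the assistant.
        parts.append("<start_of_turn>model\n")
    return "".join(parts)


if __name__ == "__main__":
    print(build_gemma_prompt([
        {"role": "system", "content": "Answer in one sentence."},
        {"role": "user", "content": "What is Gemma 3?"},
    ]))
```

For the example conversation, the helper returns `<start_of_turn>user\nAnswer in one sentence.\n\nWhat is Gemma 3?<end_of_turn>\n<start_of_turn>model\n`, leaving an open model turn for the generated reply.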

Final Thoughts

With Gemma 3, Google is pushing the boundaries of AI accessibility, making high-performance models available for more developers, researchers, and businesses. As the AI space continues to evolve, innovations like this will shape the future of how we interact with intelligent systems.

What are your thoughts? Will you be testing Gemma 3 in your projects?

tags: AI - ML - Gemma - Google